*Updated with Singapore launch information*

When Google first introduced Gemini, the focus was largely on capability. It was about speed, multimodality, and how well an AI system could reason across text, images, video and code. With the rollout of Personal Intelligence, Google is signalling a different ambition. The goal is no longer just to answer questions well, but to understand the individual asking them.

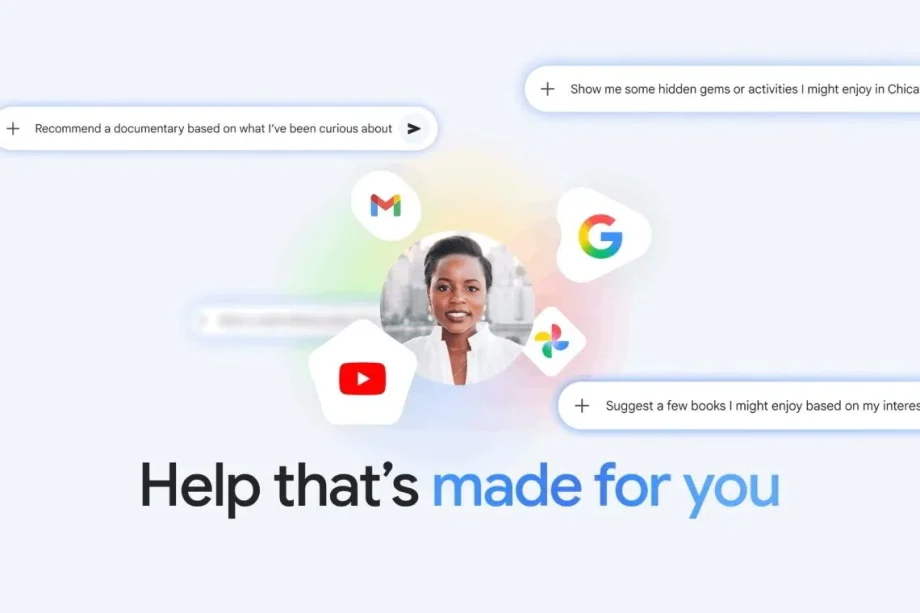

Personal Intelligence reframes Gemini not as a generic assistant, but as a system that can respond with personal context in mind. Instead of starting every interaction from zero, Gemini can now draw on a user’s own data, with permission, to provide answers that are more relevant, timely and grounded in real life. Google describes this as moving from helpful AI to AI that feels genuinely personal.

What Personal Intelligence actually means in practice

Photo: Google

At its core, Personal Intelligence allows Gemini to connect with a user’s Google services, such as Gmail, Search history, YouTube, Photos and Google Drive. This means Gemini can answer questions that previously required manual searching or relying on memory.

For example, a user can ask Gemini to summarise unread emails related to an upcoming trip, find photos from a specific holiday, or recall a video they watched weeks ago but cannot remember the title of. Instead of offering generic advice, Gemini can tailor responses based on what the user has actually done, watched or written.

Google positions this as reducing cognitive load. Users no longer have to remember where information lives. Gemini does the connecting in the background, surfacing what matters at the right moment.